Automated Tagging of Containers using AWS Lambda Function

By Axat Shah / Jun 27, 2023

Introduction:

Tagging plays a crucial role in effective AWS resource management and organization. By assigning meaningful metadata to resources, tagging enables easy identification, categorization, and tracking of assets within an AWS environment. Manual tagging, however, is time-consuming and tends to produce inconsistencies and errors, which in turn reduce resource visibility, complicate cost allocation, and make management less efficient. Automating tagging with an AWS Lambda function addresses these problems. This tutorial demonstrates how a Lambda function can tag resources at creation time, so that tags are applied consistently and accurately across the AWS environment.

Services used:

- AWS CloudTrail – To capture and log API activity and events within the AWS environment.

- Amazon CloudWatch Events (now Amazon EventBridge) – To trigger the AWS Lambda function and start the automated tagging process when an event matches a predefined rule and event pattern.

- AWS IAM – To define and manage the necessary permissions and access controls for the AWS Lambda function.

- AWS Lambda – To execute the automated tagging process.

- Amazon ECS & EKS – In this tutorial, we will focus on testing the automated tagging process for ECS & EKS. We will code the AWS Lambda function to align with ECS & EKS resources and demonstrate how automated tagging can be applied specifically to ECS & EKS resources at the time of creation.

Workflow Diagram:

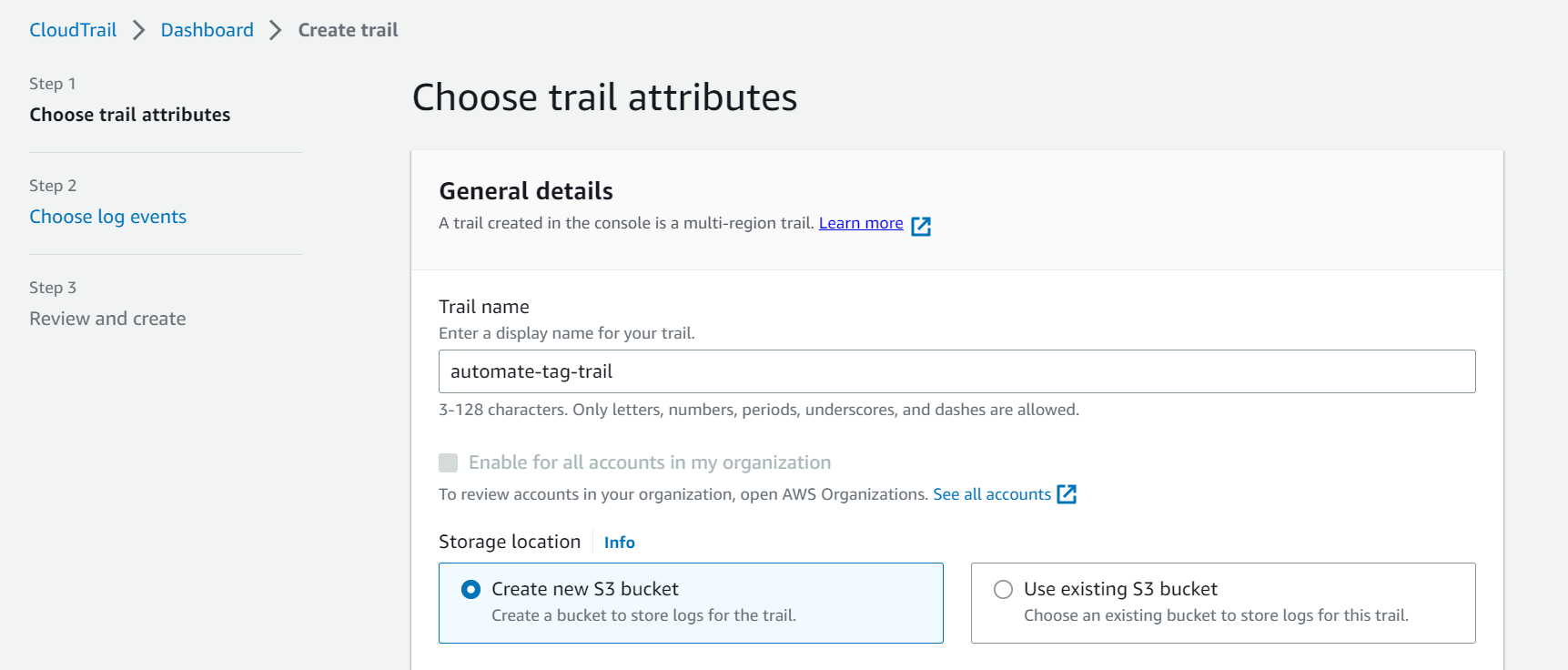

Step-1: Creating a Multi-regional CloudTrail trail

- If you already have a multi-regional trail created in your account, you can skip these steps. If not, follow the steps below.

- Go to CloudTrail > Click on “Create Trail”.

- Enter an appropriate name for the trail.

- Click “Next”. Under “Event type”, choose “Management events”.

- Click “Next”. Review and click on “Create Trail”.

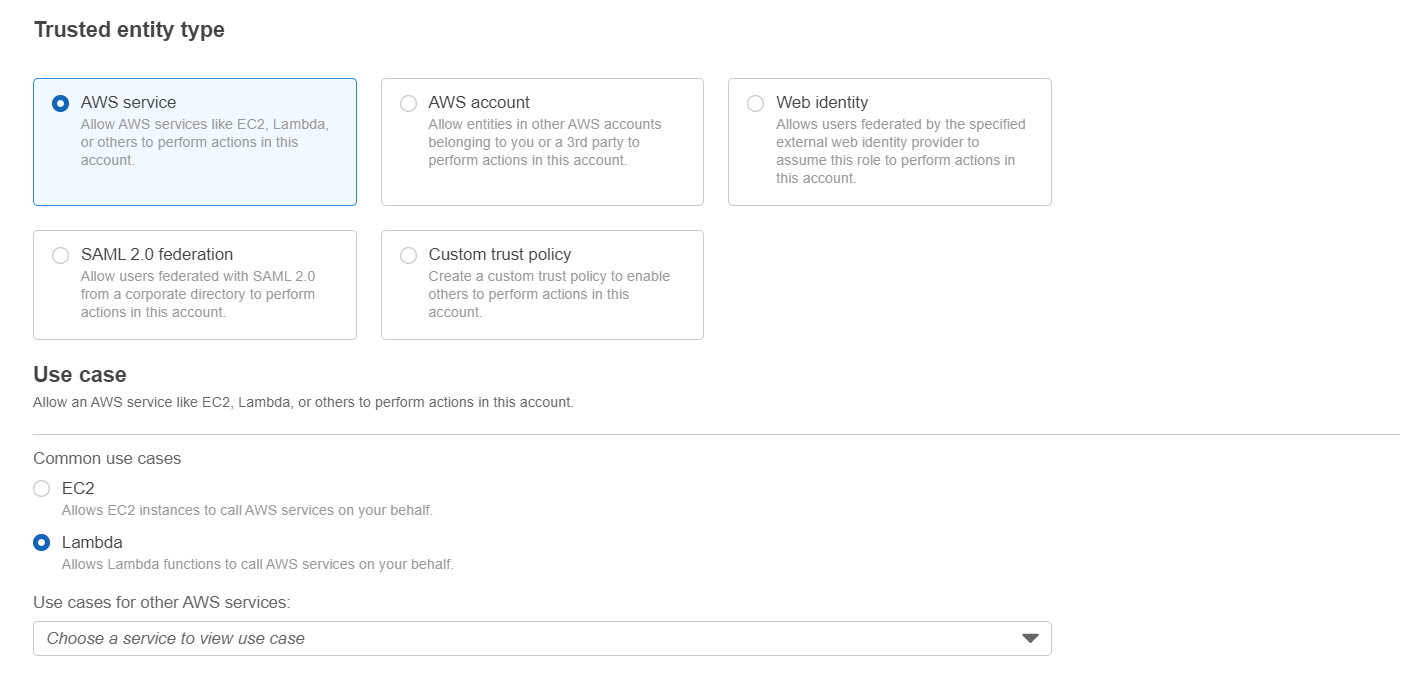
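For readers who prefer to script Step-1 rather than use the console, the same trail can be approximated with boto3. This is only a minimal sketch and not the method used in this tutorial: the helper names, the trail name and the bucket name are placeholders, and it assumes an S3 bucket already exists with a bucket policy that allows CloudTrail to write to it.

```python
def build_trail_request(trail_name: str, bucket_name: str) -> dict:
    """Build the keyword arguments for CloudTrail's create_trail call."""
    return {
        "Name": trail_name,
        "S3BucketName": bucket_name,          # assumed to exist already
        "IsMultiRegionTrail": True,           # log events from all regions
        "IncludeGlobalServiceEvents": True,   # include global services such as IAM
    }


def create_multi_region_trail(trail_name: str, bucket_name: str) -> None:
    """Create the trail and start logging. Requires AWS credentials."""
    import boto3  # imported here so the helper above works without boto3

    cloudtrail = boto3.client("cloudtrail")
    cloudtrail.create_trail(**build_trail_request(trail_name, bucket_name))
    # A trail created through the API does not log until logging is started.
    cloudtrail.start_logging(Name=trail_name)


if __name__ == "__main__":
    # Placeholder names; this only prints the request and does not call AWS.
    print(build_trail_request("auto-tagging-trail", "my-cloudtrail-logs-bucket"))
```

Keeping the request in a separate function makes it easy to review the settings before anything is created in the account.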

Step-2: Creating an IAM role for the Lambda Function

- Go to IAM and click on “Roles”. Inside “Roles” click on “Create Role”.

- Select “AWS Service” under Trusted entity type and for use case select “Lambda”. Click “Next”.

- Select appropriate policies to attach to the role. For this tutorial we have selected “AdministratorAccess”. Click “Next”.

- Provide the necessary details such as Name, Description, and Tags, then click “Create Role”.

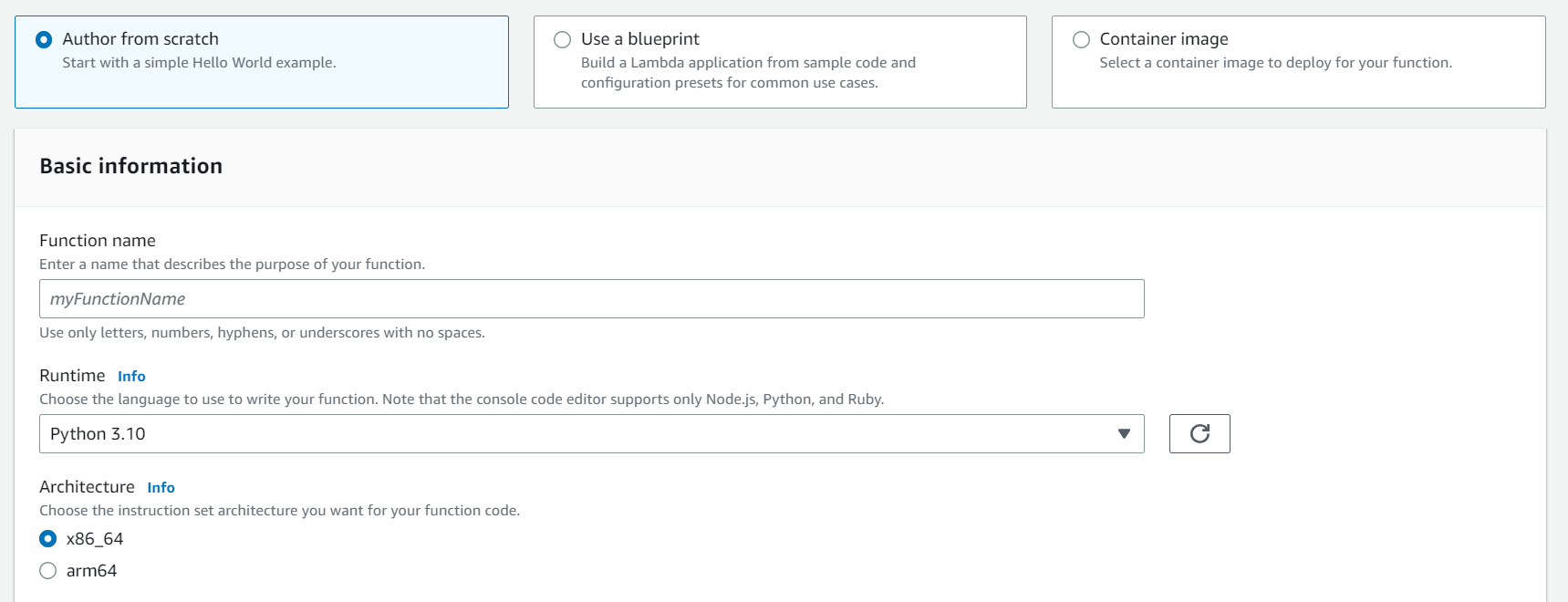
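“AdministratorAccess” keeps the tutorial simple, but the function itself only needs to tag ECS and EKS resources and write its logs. A narrower policy could look roughly like the sketch below. The statement IDs and the helper name `build_tagging_policy` are made up for illustration, and the action list is an assumption based on the calls the Lambda code makes, so review it against your own requirements before using it.

```python
import json


def build_tagging_policy() -> dict:
    """Return an IAM policy document limited to tagging and logging."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "TagContainerResources",
                "Effect": "Allow",
                # The only resource-changing calls made by the function
                "Action": ["ecs:TagResource", "eks:TagResource"],
                "Resource": "*",
            },
            {
                "Sid": "WriteFunctionLogs",
                "Effect": "Allow",
                # Lets the function write its print() output to CloudWatch Logs
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "*",
            },
        ],
    }


if __name__ == "__main__":
    # Print the JSON so it can be pasted into the IAM policy editor.
    print(json.dumps(build_tagging_policy(), indent=2))
```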

Step-3: Creating the Lambda Function

- Go to Lambda and click on “Create Function”.

- Select “Author from scratch”. Enter a suitable name for your Lambda Function and for Runtime select “Python 3.10”.

- In the “Permissions” section, expand “Change default execution role”, choose “Use an existing role”, and select the IAM role created in Step-2 from the drop-down. Click on “Create Function”.

- Once the Lambda function is created, go to the “Code” tab and paste the code below. The code reads the incoming CloudTrail event, extracts the relevant data, and uses boto3 to tag the newly created resource. I have added comments to the code to help understand it.

    import json
    import boto3
    from datetime import datetime


    def lambda_handler(event, context):
        ecs = boto3.client('ecs')  # Initialize AWS ECS client
        eks = boto3.client('eks')  # Initialize AWS EKS client

        # For troubleshooting: print the event object as a JSON string
        print(json.dumps(event))

        # Initialize lists to store resource ARNs
        ecs_arns = []  # ECS-related resource ARNs
        eks_arns = []  # EKS-related resource ARNs

        # Extract relevant information from the event
        detail = event['detail']
        eventName = detail['eventName']
        eventSource = detail['eventSource']
        user_type = detail['userIdentity']['type']
        arn = detail['userIdentity']['arn']
        principal = detail['userIdentity']['principalId']

        # Work out who created the resource; this becomes the 'Owner' tag
        if user_type == 'IAMUser':
            user = detail['userIdentity']['userName']
        else:
            # e.g. '<role-id>:<session-name>' for an assumed role
            user = principal.split(':')[-1]

        # Get the current date
        current_date = datetime.now().strftime("%m-%d-%Y")

        # Print relevant information for troubleshooting
        print('Event Source: ' + eventSource)
        print('Event Name: ' + eventName)

        # Check if 'responseElements' are present in the event
        if not detail.get('responseElements'):
            # In case response elements are unavailable
            print("ResponseElement is missing. There could be an error that occurred.")
            if detail.get('errorCode'):
                print('Error Code: ' + detail['errorCode'])
            if detail.get('errorMessage'):
                print('Error Message: ' + detail['errorMessage'])
            return False

        # Process the event based on the 'eventName' and 'eventSource'
        response = detail['responseElements']
        if eventName == 'CreateCluster' and eventSource == 'ecs.amazonaws.com':
            ecs_arns.append(response['cluster']['clusterArn'])
            print(ecs_arns)
        elif eventName == 'RegisterTaskDefinition':
            ecs_arns.append(response['taskDefinition']['taskDefinitionArn'])
            print(ecs_arns)
        elif eventName == 'CreateService':
            ecs_arns.append(response['service']['serviceArn'])
            print(ecs_arns)
        elif eventName == 'CreateNodegroup':
            eks_arns.append(response['nodegroup']['nodegroupArn'])
            print(eks_arns)
        elif eventName == 'CreateCluster' and eventSource == 'eks.amazonaws.com':
            eks_arns.append(response['cluster']['arn'])
            print(eks_arns)

        # Add tags to ECS resources (ECS expects a list of key/value pairs)
        for resource_arn in ecs_arns:
            ecs.tag_resource(
                resourceArn=resource_arn,
                tags=[
                    {'key': 'Owner', 'value': user},
                    {'key': 'Date', 'value': current_date},
                ],
            )

        # Add tags to EKS resources (EKS expects a plain dictionary)
        for resource_arn in eks_arns:
            eks.tag_resource(
                resourceArn=resource_arn,
                tags={'Owner': user, 'Date': current_date},
            )

        return True

- Once pasted, click on “Deploy” to deploy the Lambda function. Now we will need to create a trigger for this Lambda function.

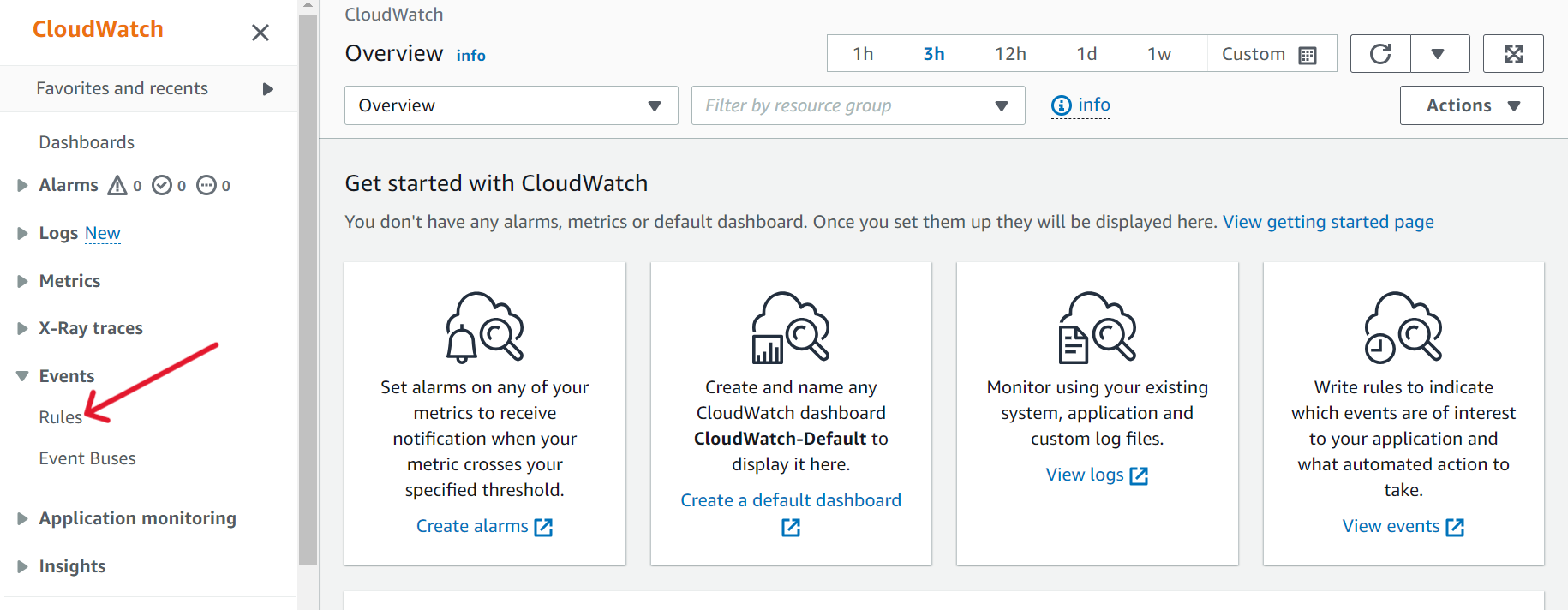

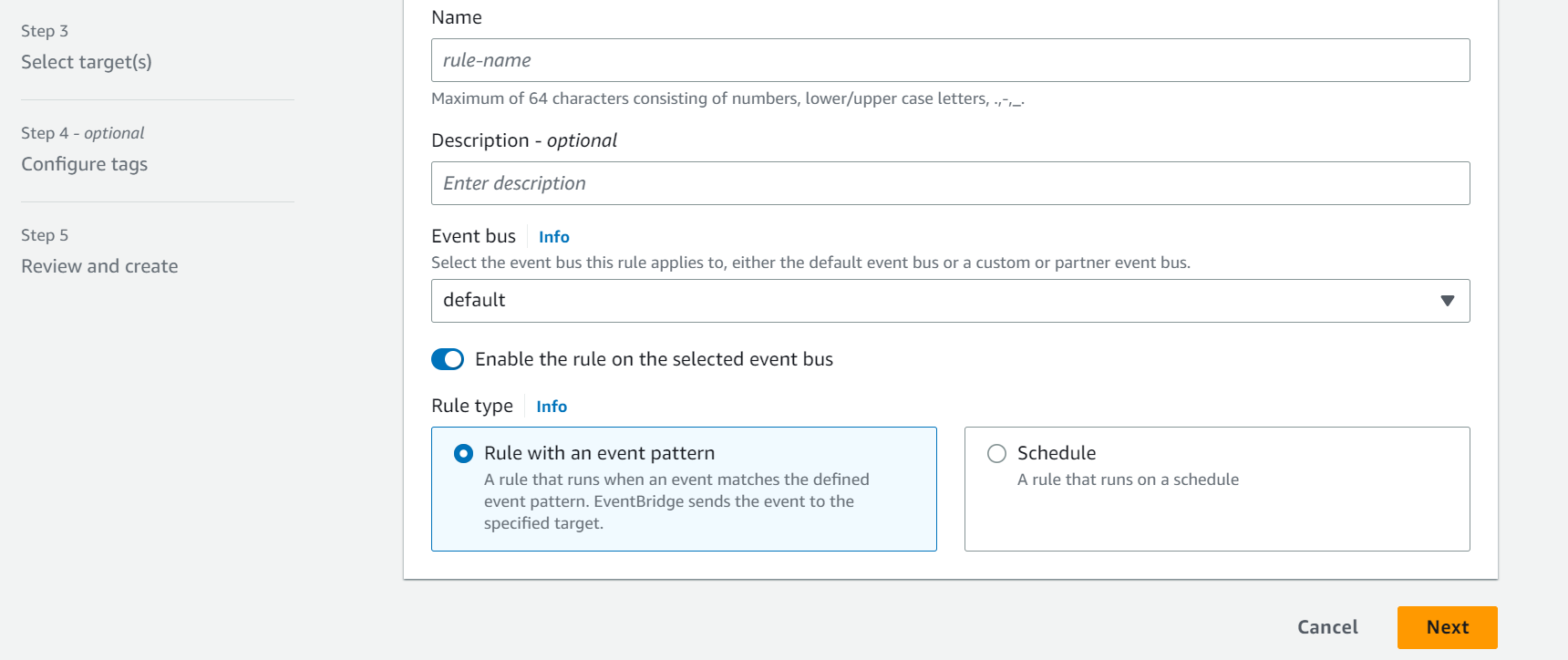
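The function tags each resource with an `Owner` value taken from the identity of the caller recorded in `detail['userIdentity']`. One possible way to derive that value is sketched below. The helper name `owner_from_identity` and the sample identities (account ID, user name, role session name) are hypothetical and only illustrate the shape of the data.

```python
def owner_from_identity(user_identity: dict) -> str:
    """Return a readable owner name from a CloudTrail userIdentity block."""
    # IAM users carry an explicit userName field.
    if user_identity.get("type") == "IAMUser" and user_identity.get("userName"):
        return user_identity["userName"]
    # Assumed-role principals look like "<role-id>:<session-name>";
    # other principals (such as root) have no colon and are returned whole.
    principal = user_identity.get("principalId", "")
    return principal.split(":")[-1] or "unknown"


if __name__ == "__main__":
    # Hypothetical identities for illustration only.
    iam_user = {
        "type": "IAMUser",
        "arn": "arn:aws:iam::111122223333:user/alice",
        "principalId": "AIDAEXAMPLEID",
        "userName": "alice",
    }
    assumed_role = {
        "type": "AssumedRole",
        "arn": "arn:aws:sts::111122223333:assumed-role/DevRole/bob-session",
        "principalId": "AROAEXAMPLEID:bob-session",
    }
    print(owner_from_identity(iam_user))
    print(owner_from_identity(assumed_role))
```

Because the helper has no AWS dependency, it can be tested locally with sample events before the function is deployed.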

Step-4: Creating a CloudWatch Event Pattern to trigger the lambda function

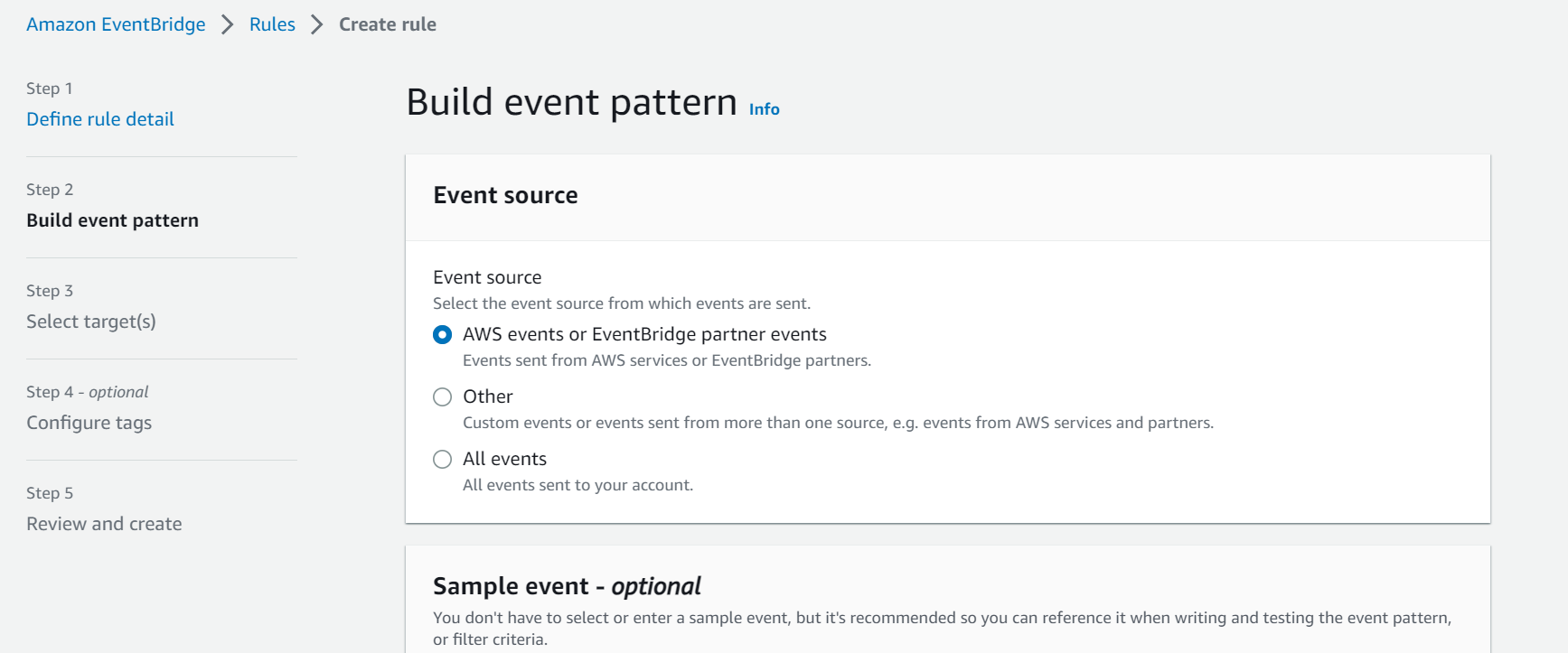

- Go to CloudWatch. In the left-side pane, under “Events”, click on “Rules”.

- Click on “Create Rule”. Enter an appropriate name for the rule. Under “Rule type”, select “Rule with an event pattern”. Click on “Next”.

- Select “AWS events or EventBridge partner events” under “Event Source”.

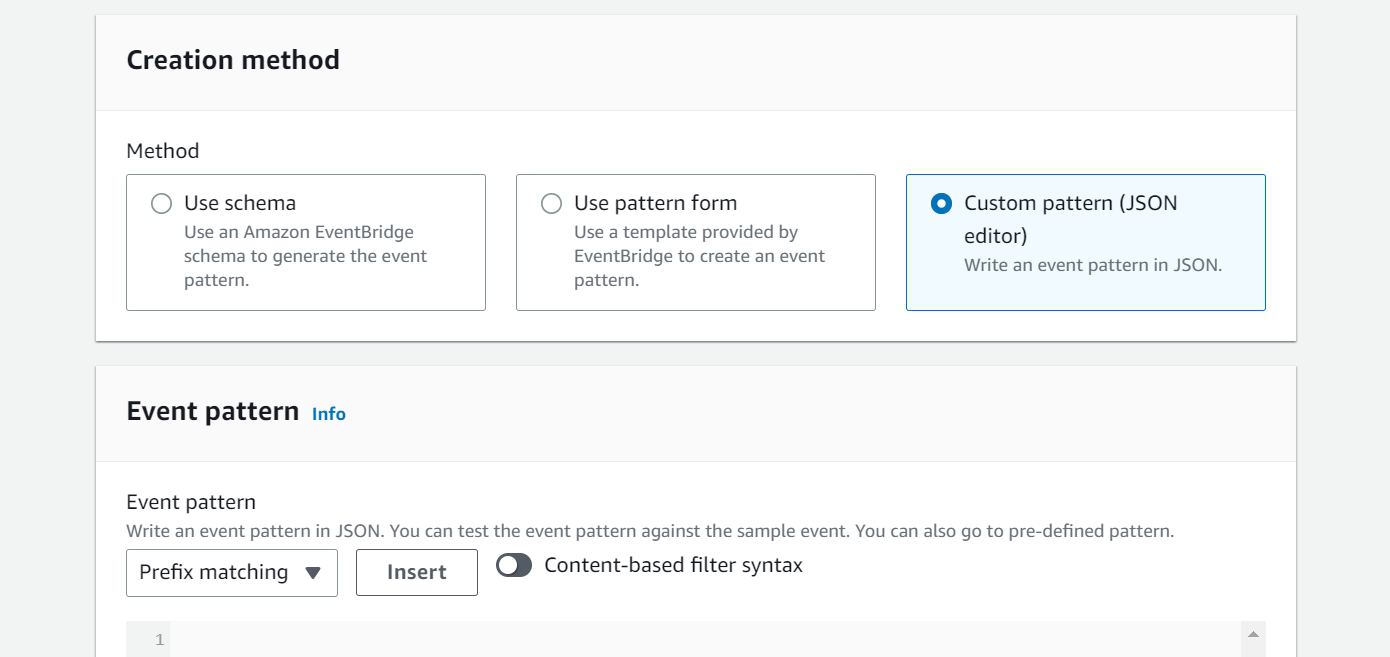

- Select a creation method based on your preference. For this tutorial we will be using “Custom pattern (JSON editor)”.

- Inside the editor, paste the following JSON code. This will identify ECS & EKS creation events.

    {
      "source": ["aws.ecs", "aws.eks"],
      "detail-type": ["AWS API Call via CloudTrail"],
      "detail": {
        "eventSource": ["ecs.amazonaws.com", "eks.amazonaws.com"],
        "eventName": ["CreateCluster", "RegisterTaskDefinition", "CreateService", "CreateNodegroup"]
      }
    }

In the above code,

- "source": ["aws.ecs", "aws.eks"] indicates that the events being monitored and matched should originate from the AWS ECS & EKS service.

- "detail-type": ["AWS API Call via CloudTrail"] specifies that the events should be API calls logged through CloudTrail.

- "detail": {...} defines the specific details and conditions of the events to be matched:

- "eventSource": ["ecs.amazonaws.com", "eks.amazonaws.com"] filters events coming from the ECS & EKS API.

- "eventName": ["CreateCluster", "RegisterTaskDefinition", "CreateService", "CreateNodegroup"] specifies the API event names (CreateCluster, RegisterTaskDefinition, CreateService, and CreateNodegroup) that will trigger the automated tagging process.

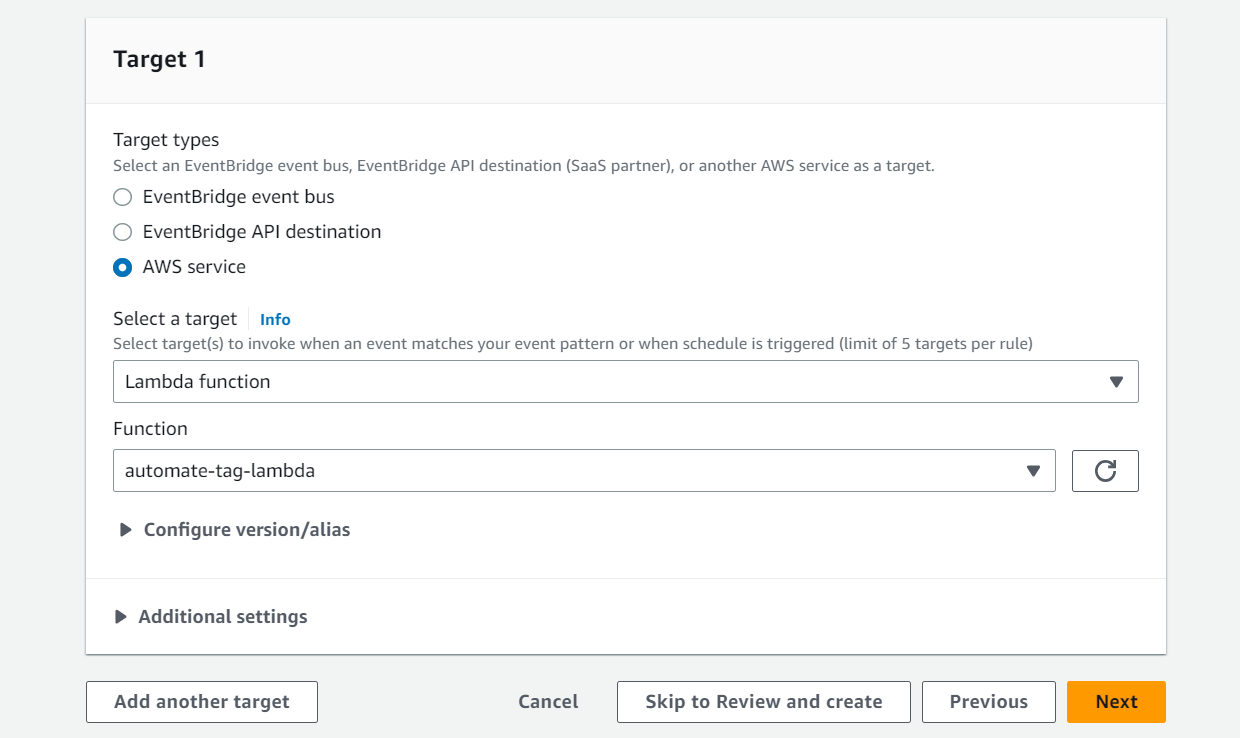

- Next, select “Lambda Function” as a target and select the previously created lambda function from the drop-down menu.

- Click “Next”. Add any tags you want on the rule itself, then review and click on “Create rule”.
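To make the matching behaviour of the event pattern from this step more concrete, here is a simplified local imitation of how such a pattern is evaluated. The `matches` helper is hypothetical and is not how the service is implemented: it only handles nested objects and lists of exact values, which is all this particular pattern uses, while the real service supports more operators.

```python
EVENT_PATTERN = {
    "source": ["aws.ecs", "aws.eks"],
    "detail-type": ["AWS API Call via CloudTrail"],
    "detail": {
        "eventSource": ["ecs.amazonaws.com", "eks.amazonaws.com"],
        "eventName": [
            "CreateCluster",
            "RegisterTaskDefinition",
            "CreateService",
            "CreateNodegroup",
        ],
    },
}


def matches(pattern: dict, event: dict) -> bool:
    """Return True if every field in the pattern is satisfied by the event."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        actual = event[key]
        if isinstance(expected, dict):
            # Nested object: every nested field must match as well.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            # A list means "any one of these values".
            return False
    return True


if __name__ == "__main__":
    # Trimmed-down sample events; real events carry many more fields.
    create = {
        "source": "aws.ecs",
        "detail-type": "AWS API Call via CloudTrail",
        "detail": {"eventSource": "ecs.amazonaws.com", "eventName": "CreateCluster"},
    }
    delete = {
        "source": "aws.ecs",
        "detail-type": "AWS API Call via CloudTrail",
        "detail": {"eventSource": "ecs.amazonaws.com", "eventName": "DeleteCluster"},
    }
    print(matches(EVENT_PATTERN, create))
    print(matches(EVENT_PATTERN, delete))
```

Fields that the pattern does not mention are ignored, which is why the extra data in a real event does not prevent a match.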

Step-5: Testing and Validation

Now, by creating ECS/EKS clusters and services in the same region as your Lambda function, you can readily verify the appropriate tagging of resources. This validation step is crucial as it allows you to ensure that the tags you expect to be applied are visible on the ECS/EKS services. In case the tags are not visible, there are a few common errors that you might encounter. One possibility is that the Lambda function did not execute properly or encountered an error during the tagging process. Another potential issue could be misconfigured IAM permissions, preventing the Lambda function from accessing and tagging the resources. By carefully reviewing the execution logs and checking the IAM settings, you can troubleshoot and resolve any issues with the tagging process, thus ensuring accurate and consistent resource management.
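Besides checking the console, the tags can also be verified from a script. The sketch below is one way to do that, with hypothetical helper names. It relies on the fact that ECS returns tags as a list of key/value pairs while EKS returns a plain dictionary, and it assumes the expected tag keys are `Owner` and `Date`, as set by the Lambda code in Step-3.

```python
def normalize_tags(response: dict) -> dict:
    """Convert an ECS or EKS list_tags_for_resource response to a dict."""
    tags = response.get("tags", {})
    if isinstance(tags, list):
        # ECS shape: [{'key': 'Owner', 'value': 'alice'}, ...]
        return {tag["key"]: tag["value"] for tag in tags}
    # EKS shape: {'Owner': 'alice', ...}
    return dict(tags)


def missing_tag_keys(response: dict, expected_keys=("Owner", "Date")) -> list:
    """Return the expected tag keys that are absent from the response."""
    present = normalize_tags(response)
    return [key for key in expected_keys if key not in present]


def check_resource(service: str, resource_arn: str) -> list:
    """Look up the tags of a live resource. Requires AWS credentials."""
    import boto3  # imported here so the helpers above work without boto3

    client = boto3.client(service)  # 'ecs' or 'eks'
    response = client.list_tags_for_resource(resourceArn=resource_arn)
    return missing_tag_keys(response)


if __name__ == "__main__":
    # Hand-written sample responses; no AWS call is made here.
    ecs_response = {"tags": [{"key": "Owner", "value": "alice"}]}
    eks_response = {"tags": {"Owner": "alice", "Date": "06-27-2023"}}
    print(missing_tag_keys(ecs_response))
    print(missing_tag_keys(eks_response))
```

An empty list means both tags were applied. If a key is missing, the function's execution logs and the IAM role are the first places to look.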

Tagging resources globally and at scale

It's important to note that AWS Lambda functions are regional resources, meaning they are confined to a specific AWS region. To extend the automated tagging functionality to resources in other regions, you need to create a similar Lambda function and event rule in each of those regions. However, manually creating them in each region can be time-consuming and prone to errors.

Infrastructure as Code (IaC) tools like Terraform or AWS CloudFormation templates can be used to create the same resources across multiple regions quickly. These tools allow you to define and provision your AWS resources, including Lambda functions, in a programmatic and reproducible manner. By defining the Lambda function and its associated resources once in the IaC configuration, you can replicate the setup across multiple regions.
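The underlying idea, one definition applied once per region, can also be illustrated in plain Python. The sketch below is not a substitute for Terraform or CloudFormation and is only meant to show the pattern. All names are hypothetical, and it assumes the Lambda function is already deployed under the same name in every target region and that each function's resource policy allows `events.amazonaws.com` to invoke it.

```python
import json


def build_region_plan(regions, rule_name, event_pattern, account_id, function_name):
    """Build one put_rule / put_targets request pair per region."""
    plan = []
    for region in regions:
        function_arn = (
            f"arn:aws:lambda:{region}:{account_id}:function:{function_name}"
        )
        plan.append({
            "region": region,
            "put_rule": {
                "Name": rule_name,
                "EventPattern": json.dumps(event_pattern),
                "State": "ENABLED",
            },
            "put_targets": {
                "Rule": rule_name,
                "Targets": [{"Id": "auto-tagger", "Arn": function_arn}],
            },
        })
    return plan


def apply_plan(plan) -> None:
    """Create the rule and target in each region. Requires AWS credentials."""
    import boto3  # imported here so build_region_plan works without boto3

    for step in plan:
        events = boto3.client("events", region_name=step["region"])
        events.put_rule(**step["put_rule"])
        events.put_targets(**step["put_targets"])


if __name__ == "__main__":
    # Placeholder account ID and names; this only prints the plan.
    pattern = {"source": ["aws.ecs", "aws.eks"]}
    for step in build_region_plan(
        ["us-east-1", "eu-west-1"], "auto-tag-rule", pattern,
        "111122223333", "auto-tagger",
    ):
        print(step["region"], step["put_targets"]["Targets"][0]["Arn"])
```

An IaC tool adds what this sketch lacks, such as state tracking, drift detection, and clean removal of the resources.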

By appropriately editing the event pattern and Lambda code, you can extend the automation to tag any resources beyond ECS and EKS. You can customize the event pattern to trigger the Lambda function based on different API events or resource types, enabling you to automate tagging for a wide range of AWS resources. This flexibility ensures that your resource management remains consistent and efficient across your entire AWS environment.
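One way to keep the Lambda code easy to extend is to replace the chain of `if`/`elif` checks with a lookup table, so that supporting a new API event means adding one entry. The sketch below shows this as a possible restructuring, not as the code used in this tutorial. The `ARN_PATHS` table and `extract_arn` helper are hypothetical names, and the paths are the same ones the Step-3 code reads from `responseElements`.

```python
# (eventSource, eventName) -> keys to follow inside 'responseElements'
ARN_PATHS = {
    ("ecs.amazonaws.com", "CreateCluster"): ("cluster", "clusterArn"),
    ("ecs.amazonaws.com", "RegisterTaskDefinition"): ("taskDefinition", "taskDefinitionArn"),
    ("ecs.amazonaws.com", "CreateService"): ("service", "serviceArn"),
    ("eks.amazonaws.com", "CreateNodegroup"): ("nodegroup", "nodegroupArn"),
    ("eks.amazonaws.com", "CreateCluster"): ("cluster", "arn"),
}


def extract_arn(detail: dict):
    """Return the ARN of the created resource, or None if not recognized."""
    path = ARN_PATHS.get((detail.get("eventSource"), detail.get("eventName")))
    if path is None:
        return None  # event type not covered by the table
    node = detail.get("responseElements") or {}
    for key in path:
        if not isinstance(node, dict) or key not in node:
            return None  # response did not contain the expected field
        node = node[key]
    return node


if __name__ == "__main__":
    # Trimmed-down sample with a made-up ARN, for illustration only.
    sample = {
        "eventSource": "eks.amazonaws.com",
        "eventName": "CreateCluster",
        "responseElements": {
            "cluster": {"arn": "arn:aws:eks:us-east-1:111122223333:cluster/demo"}
        },
    }
    print(extract_arn(sample))
```

With this layout, covering another service comes down to a new table entry, a matching addition to the event pattern, and the tagging call for that service.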

Conclusion

In conclusion, automated tagging with an AWS Lambda function simplifies resource management within an AWS environment. Streamlining the tagging process and enforcing consistent practices reduces manual errors, enhances resource visibility, and simplifies cost allocation. The flexibility of Lambda allows for customization and scalability, enabling organizations to automate tagging for a wide range of resources at a low cost. The result is a more consistent, better-organized AWS environment that is easier to operate and audit.